How Prolific detects bots and AI in online research

AI agents are becoming more sophisticated and better at mimicking human behavior. They’re also more accessible than ever. The online research world is now grappling with what this means for the authenticity of human feedback.

Throughout this year, we’ve been thinking carefully about how to tackle this and rolling out new measures. From rigorous onboarding and live ID checks to high-precision authenticity detection in studies, we’re focused on catching bots, agentic AI, and participants who use AI tools without the researcher’s consent.

This guide explains how we’re tackling this challenge to ensure you always receive authentic human data from Prolific.

What are the three threats bots and AI pose to online research quality?

When researchers talk about the threat of “bots and AI”, they usually mean one of three distinct threats, and each requires a different detection approach.

1. Traditional bots

Bots have existed for years. These automated programs typically reveal themselves through predictable patterns, like:

- Straight-lining survey responses

- Completing studies at inhuman speeds

- Failing basic CAPTCHA tests

They're the easiest threat to detect because they can’t mimic genuine human behavior very well. Learn more about bots.
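To make the straight-lining pattern concrete, here’s a minimal, hypothetical Python sketch of how a survey tool might flag it in a rating grid. The function names and thresholds (at least 5 items, 90% identical answers) are illustrative assumptions, not Prolific’s actual rules.

```python
def straight_lining_score(grid_answers: list[int]) -> float:
    """Share of answers that match the most common answer (1.0 = every answer identical)."""
    if not grid_answers:
        return 0.0
    most_common = max(set(grid_answers), key=grid_answers.count)
    return grid_answers.count(most_common) / len(grid_answers)


def looks_straight_lined(grid_answers: list[int], min_items: int = 5, threshold: float = 0.9) -> bool:
    """Flag a rating grid when nearly every answer is the same option.

    Hypothetical thresholds: grids shorter than `min_items` are never flagged,
    because a short grid can be answered uniformly for legitimate reasons.
    """
    return len(grid_answers) >= min_items and straight_lining_score(grid_answers) >= threshold


# Example: a 10-item agreement grid on a 1-5 scale.
print(looks_straight_lined([3] * 10))                        # True: every answer is "3"
print(looks_straight_lined([4, 2, 5, 3, 4, 1, 2, 4, 5, 3]))  # False: varied answers
```

On its own, a uniform grid is weak evidence. That’s why a signal like this is best combined with others, such as completion speed.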

2. Agentic AI

Agentic AI is a more sophisticated threat. These systems can reason, adapt their behavior, and pursue goals with apparent intentionality. Unlike standard bots, AI agents can adjust their responses based on context and try to appear more human.

You can learn more about agentic AI and how it works in this article.

3. Participants using AI assistance

One of the biggest threats today isn't bots or agents, but human participants who use ChatGPT or similar tools to answer open-ended questions. These participants legitimately join the research pool, but they compromise data quality by using AI to augment their responses instead of providing authentic answers.

AI-augmented answers aren’t inherently a problem. In some research designs, using AI assistance might be acceptable as long as it’s transparent and aligned with the researcher’s goals. The issue arises when participants use AI in studies that rely on genuine human insight, making the data less reliable and harder to interpret.

Where we need to focus today: humans using AI

While Prolific continues to develop protections against all three threats, our current priorities reflect the threats researchers actually encounter in studies right now.

In reality, participants using ChatGPT to answer open-ended questions pose a far more common threat than AI agents. These are real humans who passed all verification checks legitimately but are cutting corners on specific questions. This matters because it shows where to focus detection efforts.

Each threat requires different detection strategies

Prolific's verification systems address all three threats to data quality: bots, agentic AI, and humans using AI.

We also have measures in place to detect these threats at each stage of the participant journey: during onboarding, while participants use the Prolific app, and during studies. This creates a multi-layered defense that’s very difficult to bypass.

How does Prolific verify new participants during onboarding?

Before participants can access any studies, Prolific runs verification checks to catch bots, AI agents, and potential fraudsters. New participants coming off our waitlist complete over 50 verification steps before entering the active research pool.

Onboarding checks that simple bots would fail

Most simple bots struggle with the wide variety of checks built into onboarding. For example, participants must verify both their phone number and their email address to pass onboarding. In the onboarding study, unusual response patterns, failed attention checks, and failure to follow instructions often catch simple bots. Similarly, if a VPN is detected, the participant’s account won’t be approved.

More advanced agentic AI might slip past checks made for simpler bots, but our further measures tackle those. This broad combination of checks during onboarding keeps the process robust, even as AI tools evolve.

AI detection during onboarding catches bots, AI agents, and participants using LLMs

As part of the 50+ onboarding checks each participant has to pass, we actively assess for AI-like behaviors and content, detecting AI use with 98.7% precision. In other words, 98.7% of the participants we flag for AI use are genuinely using it, so we can reliably keep them out of the pool without penalizing honest applicants.

Identity document verification with a live video comparison catches bots and AI agents

Every new participant completes ID verification that includes a live video selfie. This video liveness technology from our partner Entrust maintains a fraud rate of less than 0.1% and achieves a false acceptance rate of just 0.01% (one in 10,000). The system detects deepfakes, manipulated ID documents, and other fraud attempts that both bots and AI agents would struggle to bypass.

Video liveness checks present a significant barrier for pure AI agents, as they have no physical face to present. This means that it’s currently not possible for an agent to sign up and create a Prolific account without a human present. And that human face must match a real, government-issued ID.

This is the kind of confidence that underpins our 100% Human Guarantee: if any AI agents posing as participants bypass our checks and are detected in your study, we’ll give you twice your money back for those flagged participants. Find out how to qualify.

How does Prolific monitor active participants in-app?

Verification doesn't stop after onboarding. Participants in Prolific's active research pool undergo ongoing monitoring, which catches threats that emerge over time.

Activity monitoring and study access limits deter bots

Prolific monitors participant behavior for patterns that suggest automation. When the system detects unusually high activity (like excessive study refreshes or repeated rapid clicks on study reservations), it temporarily limits that participant's access to studies.

This deters people from using bots to gain unfair advantages in study selection, while encouraging them to wait for Prolific's systematic refreshes.
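One common way to build this kind of limit is a sliding-window rate limiter. The sketch below is a hypothetical Python illustration. The class name and the numbers (refreshes per window, window length, pause length) are placeholders, not Prolific’s real limits.

```python
from collections import deque


class RefreshLimiter:
    """Sliding-window limiter: allow at most `max_events` refreshes in any
    `window_s`-second window, then pause access for `cooldown_s` seconds.

    All parameter values are hypothetical placeholders.
    """

    def __init__(self, max_events: int = 10, window_s: float = 60.0, cooldown_s: float = 300.0) -> None:
        self.max_events = max_events
        self.window_s = window_s
        self.cooldown_s = cooldown_s
        self.events: deque[float] = deque()
        self.blocked_until = 0.0

    def allow(self, now: float) -> bool:
        """Record a refresh at time `now` (seconds); return False while access is paused."""
        if now < self.blocked_until:
            return False
        # Drop refreshes that have slid out of the current window.
        while self.events and now - self.events[0] >= self.window_s:
            self.events.popleft()
        self.events.append(now)
        if len(self.events) > self.max_events:
            # Unusually high activity: pause access temporarily and start afresh.
            self.blocked_until = now + self.cooldown_s
            self.events.clear()
            return False
        return True


limiter = RefreshLimiter(max_events=3, window_s=10.0, cooldown_s=60.0)
print([limiter.allow(t) for t in (0, 1, 2, 3, 4, 63)])
# [True, True, True, False, False, True]
```

The timestamps are passed in explicitly so the logic is easy to test. A real service would read a monotonic clock instead.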

Periodic identity confirmation catches bots and AI agents

Participants must complete periodic ID confirmation checks that require a new live video selfie, which gets compared against their original identification document. These checks appear without warning and give participants just one hour to complete verification.

Participants who fail these checks face immediate account suspension. Those who miss the deadline must restart the entire 50-check onboarding process, going through things like ID verification, phone number confirmation, and the onboarding study again.

This periodic verification catches account takeovers where bots or AI agents attempt to use legitimate accounts. Even if an AI agent was deployed after initial onboarding, it can't maintain access when faced with unexpected video liveness checks.

Researcher feedback catches bots, AI agents, and participants using LLMs

Every completed study generates researcher feedback that feeds into participant quality scores. When researchers reject submissions for concerning behavior (such as clearly AI-generated responses or completion times three standard deviations below the study mean), these rejections directly impact the participant's ability to access future studies. Quality scores fluctuate based on ongoing performance, and participants who drop below the top quality thresholds lose study access entirely.

This creates a powerful deterrent against using AI assistance or automation. Even if a participant thinks they can use ChatGPT without detection once or twice, the cumulative effect of researcher rejections will quickly exclude them from the research pool. To regain access, they have to return to the waitlist (where only 13% of applicants are approved), pass all verification checks with completely new credentials, and avoid any connection to their previously banned account.
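The completion-time example mentioned earlier (more than three standard deviations below the study mean) can be expressed as a simple z-score rule. Below is a hypothetical Python sketch. It assumes completion times in seconds, keyed by participant ID, and it illustrates the criterion rather than any Prolific tool.

```python
from statistics import mean, stdev


def flag_fast_submissions(times_s: dict[str, float], k: float = 3.0) -> list[str]:
    """Return participant IDs whose completion time is more than k sample
    standard deviations below the mean completion time."""
    if len(times_s) < 2:
        return []  # stdev() needs at least two data points
    values = list(times_s.values())
    cutoff = mean(values) - k * stdev(values)
    return [pid for pid, t in times_s.items() if t < cutoff]


# Hypothetical study: 29 participants take about 10 minutes, one takes 30 seconds.
times = {f"p{i}": 600.0 for i in range(29)}
times["fast"] = 30.0
print(flag_fast_submissions(times))  # ['fast']
```

This rule has a caveat. A very fast outlier also inflates the standard deviation. In a sample of 10 or fewer submissions, a single submission can never fall three standard deviations below the mean, so a median-based cutoff may be more robust for small studies.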

Suspicious activity alerts the team for further investigation

Some signals warrant a closer look but aren’t conclusive enough to justify an automatic ban. For these, our extensive behavioral tracking alerts our team to investigate. For example, if we detect inconsistent answers to demographic questions or strange IP activity, our team investigates the flagged participant. Similarly, if customers report suspicious participants to us via our feedback form or support team, we look into it.

Every day, our team carries out hundreds of manual checks to make fair decisions about suspicious participant accounts. It’s important to us that we only remove participants who are genuinely bad actors or low quality, not honest participants.

How does Prolific help researchers verify participants in studies?

Once participants leave Prolific and enter external study platforms, we have additional verification measures in place to help you maintain data quality.

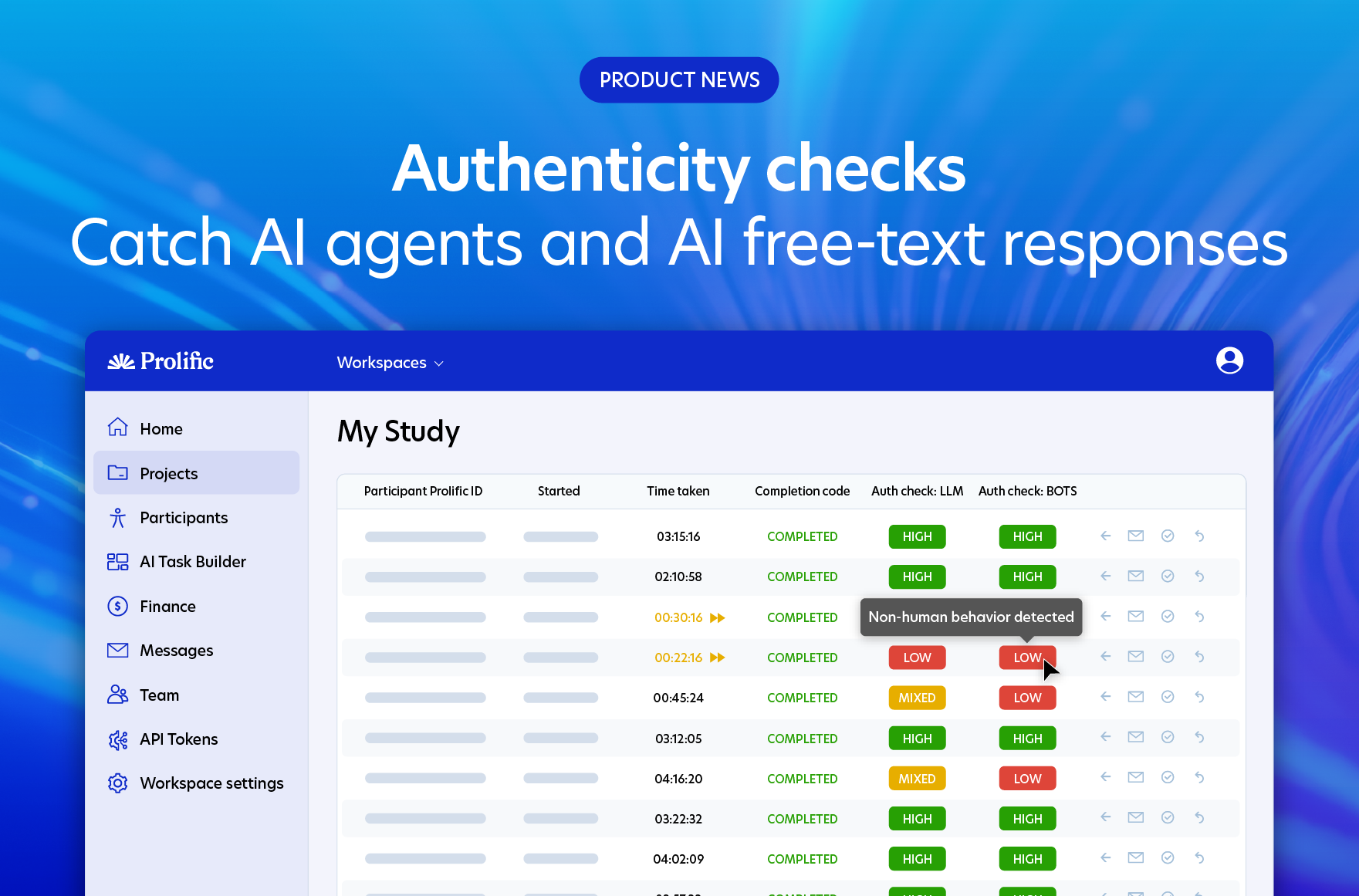

Authenticity checks catch AI agents and participants using LLMs to answer

Prolific offers two kinds of authenticity checks that detect AI misuse while participants take studies.

- LLM authenticity checks work with studies in both Qualtrics and Prolific’s AI Task Builder to catch participants using LLMs (like ChatGPT) to generate free-text responses. These checks work on free-text questions with 98.7% precision by examining over 15 different behavioral patterns suggestive of AI-assisted responses, such as copy-pasting and tab-switching.

- Bot authenticity checks flag AI agents in your study and achieved 100% accuracy in testing. They look for non-human behaviors or automated environments across your whole Qualtrics study and work with any question type. In testing against various other methods on the market, they were the leading way to detect AI agents, with a perfect record of separating humans from AI agents.

Researchers can reject submissions flagged as “low authenticity” by authenticity checks. These rejections feed back into the participant’s quality score, with repeat rejections resulting in a ban. The Prolific team will also investigate participants who repeatedly fail authenticity checks, even if researchers don’t reject their responses.

Because our Bot authenticity checks achieved 100% accuracy in testing, we can offer our 100% Human Guarantee. If they detect any AI agents in your study, we’ll give you twice your money back for those participants. Learn more.
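Precision (the figure quoted for LLM authenticity checks) and accuracy (the figure quoted for Bot authenticity checks) measure different things. The Python sketch below uses made-up counts to show how each is computed from a detector’s results. It also adds recall, which neither figure covers. Precision is the share of flagged responses that really were AI-assisted. Recall is the share of AI-assisted responses that actually got flagged.

```python
def precision(tp: int, fp: int) -> float:
    """Share of flagged responses that were truly AI-assisted."""
    flagged = tp + fp
    return tp / flagged if flagged else 0.0


def recall(tp: int, fn: int) -> float:
    """Share of truly AI-assisted responses that were flagged."""
    positives = tp + fn
    return tp / positives if positives else 0.0


def accuracy(tp: int, fp: int, tn: int, fn: int) -> float:
    """Share of all responses classified correctly, flagged or not."""
    total = tp + fp + tn + fn
    return (tp + tn) / total if total else 0.0


# Hypothetical confusion-matrix counts, for illustration only.
tp, fp, tn, fn = 987, 13, 8_900, 100
print(f"precision={precision(tp, fp):.3f}")          # precision=0.987
print(f"recall={recall(tp, fn):.3f}")                # recall=0.908
print(f"accuracy={accuracy(tp, fp, tn, fn):.3f}")    # accuracy=0.989
```

With these made-up counts, precision is 0.987 even though about 9% of AI-assisted responses go unflagged. Precision alone tells you how trustworthy a flag is, not how much a detector misses.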

Exceptionally fast submissions catch bots and low-effort participants

Prolific automatically tracks study completion times and flags submissions that fall far outside attentive, human performance. Researchers can review submissions flagged as “exceptionally fast” and reject them if the speed suggests that no attention has been paid or automation is being used.

These rejections feed back into participant quality scores, creating consequences that discourage future attempts at gaming the system. Again, even if researchers choose not to reject, Prolific still keeps a record of how many times a participant has been flagged for exceptionally fast submissions. Participants whose submissions repeatedly show suspicious or low-quality activity are flagged to the Prolific team for investigation.
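The key point is that flags accumulate whether or not a researcher rejects the submission. Here is a minimal, hypothetical Python sketch of that bookkeeping. The class name and the review threshold of three flags are assumptions for illustration.

```python
from collections import Counter


class FastFlagHistory:
    """Hypothetical record of 'exceptionally fast' flags per participant.

    Flags are counted whether or not the researcher rejected the submission.
    Rejections are tracked separately because they also affect quality scores.
    """

    def __init__(self, review_threshold: int = 3) -> None:
        self.review_threshold = review_threshold  # assumed value, for illustration
        self.flags: Counter[str] = Counter()
        self.rejections: Counter[str] = Counter()

    def record(self, participant_id: str, rejected: bool) -> bool:
        """Record one flag; return True once the participant should go to manual review."""
        self.flags[participant_id] += 1
        if rejected:
            self.rejections[participant_id] += 1
        return self.flags[participant_id] >= self.review_threshold


history = FastFlagHistory()
print(history.record("p1", rejected=False))  # False: first flag
print(history.record("p1", rejected=False))  # False: second flag
print(history.record("p1", rejected=True))   # True: third flag reaches the threshold
```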

What we’re building next

Prolific is continuously developing additional verification layers that will further strengthen protection against bots, AI agents, and human participants using LLMs. The following features represent the next generation of detection capabilities designed to stay ahead of evolving threats.

This is an evolving space, and we’re staying vigilant. As AI tools advance, we’re committed to ongoing improvement so our systems remain reliable, flexible, and ready for whatever comes next.

Authenticity check upgrades

Prolific is developing enhanced content analysis designed to improve our ability to catch agentic AI and participants who are inputting AI-generated answers. While the current system already catches many instances through behavioral analysis with 98.7% precision, this upgrade will improve accuracy further and pick up more sophisticated AI agents. It draws on the latest research into high-precision AI detection, ensuring we maintain fairness to honest participants.

Device fingerprinting

Device fingerprinting examines multiple technical signals to identify automated environments used by both traditional bots and agentic AI. The system analyzes things like IP addresses, browser configurations, screen resolutions, operating system details, and dozens of other data points that create a unique profile for each device.

Automated systems often reveal themselves through technical configurations that differ from those of typical human users. Bots and AI agents frequently run in cloud environments or virtual machines that leave distinctive fingerprints. By comparing device profiles against patterns associated with automation, this system catches threats that might otherwise appear human through their behavior alone.
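As a rough illustration of the idea, the hypothetical Python sketch below hashes a handful of device signals into a stable fingerprint and lists a few simple automation indicators. The signal set is an assumption and is far smaller than a production system’s. `navigator.webdriver` is a real browser property that reports true when the browser is under automation control. The datacenter-IP flag stands in for an IP-reputation lookup, which the sketch doesn’t model.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DeviceSignals:
    user_agent: str
    screen: str              # e.g. "1920x1080"
    timezone: str            # e.g. "Europe/London"
    platform: str
    webdriver: bool          # value of navigator.webdriver reported by the browser
    ip_is_datacenter: bool   # hypothetical result of an IP-reputation lookup


def fingerprint(signals: DeviceSignals) -> str:
    """Stable SHA-256 hash of the signals, so a returning device can be recognized."""
    payload = json.dumps(asdict(signals), sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def automation_indicators(signals: DeviceSignals) -> list[str]:
    """List simple red flags associated with automated environments."""
    reasons = []
    if signals.webdriver:
        reasons.append("navigator.webdriver is true")
    if "HeadlessChrome" in signals.user_agent:
        reasons.append("headless browser user agent")
    if signals.ip_is_datacenter:
        reasons.append("IP address in a cloud or datacenter range")
    return reasons


laptop = DeviceSignals("Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0",
                       "1920x1080", "Europe/London", "Win32", False, False)
agent = DeviceSignals("Mozilla/5.0 (X11; Linux x86_64) HeadlessChrome/120.0",
                      "800x600", "UTC", "Linux x86_64", True, True)
print(automation_indicators(laptop))  # []
print(automation_indicators(agent))
```

Hashing a fixed set of signals means a single changed value, such as a new screen, produces a different fingerprint. A real system would likely need fuzzier matching than this exact-hash approach.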

Cloudflare Bot Management

Cloudflare Bot Management brings enterprise-grade detection to in-app participant actions. This system monitors activities like study reservations, profile updates, and responses to "about you" questions, determining with high accuracy whether actions originate from automated environments like ChatGPT.

Cloudflare reports that its bot management technology achieves a false positive rate as low as 0.0001%, or roughly one genuine action in a million mistakenly flagged as automated. That low rate matters because honest participants face minimal disruption and are treated fairly, while the system still creates an effective barrier against automation.

Why agentic AI is detectable (and less common than you might think)

Recent research has generated headlines about AI agents that supposedly fool detection systems. The reality is more nuanced. Yes, it's technically possible to create AI agents, but several factors limit how serious a threat they pose in practice.

Creating effective AI agents requires substantial effort

While it’s relatively quick to create a basic AI agent, these low-effort agents are the easiest for Prolific to detect. Creating an agent designed to evade our specific detection systems is genuinely difficult and time-consuming, typically taking weeks to months of development.

The challenge isn’t just building the agent, but understanding what will evade detection without getting banned during testing. Since Prolific doesn’t reveal all detection methods, would-be fraudsters are essentially developing in the dark, risking their account with each test.

Most Prolific participants are honest contributors who want to take part in research responsibly. They’re not looking for ways to manipulate the system, nor do they typically have the technical expertise or the desire to build sophisticated AI-driven tools for fraud. Our verification systems protect the honest majority by identifying the small number of bad actors who do attempt it, keeping the platform fair and trustworthy for everyone.

However, we know that the broader AI landscape continues to evolve, and so will the threats. That’s why we treat this as a moving target and continue to invest in new detection strategies, ensuring our systems stay ahead as new capabilities and threats emerge.

AI agents don’t stand to make more money than honest participants

Prolific has provisions in place that remove most financial incentives for deploying AI agents. Prolific only lets each participant enter one study at a time, and participants face rejection (with no pay) if they complete studies at very fast speeds. This means an AI agent would also have to take one study at a time and answer it at the same pace as human participants. It would earn the same amount as a human participant completing studies normally, while facing much higher risks of detection and permanent exclusion.

Even if an AI agent worked at a human pace but spent 24/7 responding to studies, our systems would detect this.

Compare this to the effort required to create and maintain a truly undetectable AI agent. After lots of development work and ongoing monitoring to avoid detection, the potential earnings match what a person could make by simply participating honestly.
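The arithmetic behind this argument is simple. Participants can take only one study at a time, and submissions completed too quickly are rejected with no pay. Together, these rules cap hourly earnings at a level set by the reward per study and the fastest completion time that still gets accepted, and that cap is the same for a human and an agent. The Python sketch below uses made-up numbers to show the bound. Real rewards and timing thresholds vary by study.

```python
def max_hourly_earnings(reward_per_study: float, fastest_accepted_minutes: float) -> float:
    """Upper bound on hourly earnings when only one study can be taken at a time.

    Anyone, human or agent, who finishes faster than `fastest_accepted_minutes`
    risks rejection with no pay, so this is the best rate either can sustain.
    """
    studies_per_hour = 60 / fastest_accepted_minutes
    return reward_per_study * studies_per_hour


# Hypothetical figures: £1.50 per study, 6 minutes as the fastest accepted time.
print(max_hourly_earnings(1.50, 6))  # 15.0
```

This is only an upper bound. In practice, the number of studies available at any moment limits earnings further.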

Getting back onto Prolific after being banned is exceptionally difficult

Once a participant's account is banned, the barriers to creating a new account are severe.

First, they'd need to join the waitlist, where only 13% of applicants receive invitations to take onboarding. Even if they managed to secure an invitation, they would need to present themselves as an entirely different person, requiring fresh credentials and documentation across every verification layer.

The combination of competitive access, comprehensive verification requirements, and sophisticated fraud detection makes successful re-entry extremely unlikely.

It’s why we launched our 100% Human Guarantee

These checks and measures genuinely make it difficult for any non-human participant to exist on our platform. That’s why we’re willing to back this up with money through our guarantee: get 100% human participants, or we’ll give you twice your money back per non-human participant flagged. Learn more.

Staying ahead of evolving threats

AI capabilities will continue advancing, and Prolific's detection systems will evolve alongside them. Prolific’s approach balances multiple priorities: catching sophisticated threats before they become widespread problems, maintaining fair treatment of honest participants who deserve trust, and providing researchers with reliable data they can build on with confidence.

Current systems already detect traditional bots, catch most AI agents, and identify participants who use AI to answer questions. Development work on next-generation detection ensures Prolific stays ahead of emerging capabilities while avoiding false positives that would unfairly exclude legitimate participants.

The combination of checks at every stage of the participant journey creates defense in depth, with each layer catching different kinds of threats. Together, the dozens of checks maintain data quality even as both AI capabilities and attempts to misuse them become more sophisticated.

Want to learn more about Prolific's comprehensive data quality measures? Discover how we set the gold standard for authentic human data collection. Ready to get started? Sign up to Prolific today.

Or watch this 90-second video to get a brief overview of our multi-layered approach to data quality as a whole.

Try Prolific today and feel even more confident with our 100% Human Guarantee

If you detect any AI agents in your study that have been missed by our pre-study checks, you’ll get twice your money back for those flagged participants.

Right now, more than a million participant submissions have been checked by our Bot authenticity checks, and only 0.04% have been flagged as AI agents.

To qualify for our guarantee:

- Use Qualtrics and Prolific together

- Set up Bot authenticity checks (read on to find out how)

- Launch your study

- If any participants are flagged as “low authenticity”, we credit you twice your money back for those flagged participants within 21 days
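Here’s a small worked example of the guarantee arithmetic in Python. It assumes `cost_per_participant` is what you paid for each flagged participant. The function name and figures are illustrative only, and the guarantee’s own terms determine what counts toward that cost.

```python
from decimal import Decimal


def guarantee_credit(cost_per_participant: Decimal, flagged_participants: int) -> Decimal:
    """Twice the per-participant cost for each participant flagged as 'low authenticity'."""
    if flagged_participants < 0:
        raise ValueError("flagged_participants cannot be negative")
    return 2 * cost_per_participant * flagged_participants


# Hypothetical: £2.40 per participant, 3 participants flagged.
print(guarantee_credit(Decimal("2.40"), 3))  # 14.40
```

`Decimal` avoids the rounding surprises that binary floats can introduce in currency amounts.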